The post How to use OpenVPN over an IP over ICMP tunnel (Hans) appeared first on nethack.

The very first question may be: why tunnel IP over ICMP at all?

Sometimes you may find yourself in a situation where you have network connectivity but no access to the Internet because of annoying restrictions. That could be, for example, a proxy which requires authentication, a captive portal like in hotels, or the ports you need simply not being open. As long as you can ping hosts on the Internet, you can use Hans (IP over ICMP) to get around these restrictions. One problem that could still arise is that the local firewall implements some kind of ICMP flood protection and your client gets blocked because Hans generates too much ICMP traffic (and it will definitely produce quite a lot of it).

IMPORTANT: Hans doesn’t do any encryption! That’s why I want to pipe my OpenVPN connection through it.

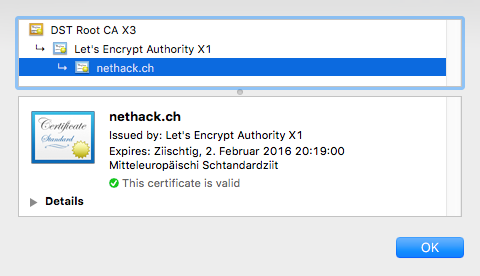

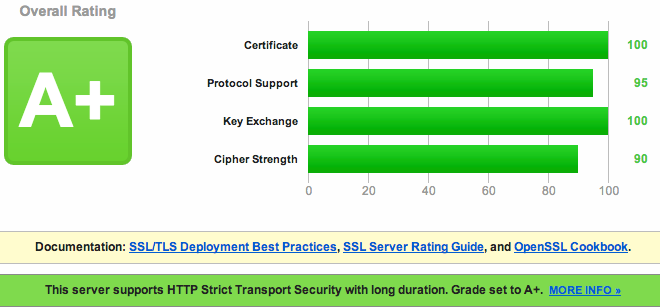

How to set up an OpenVPN server with certificates and Two-Factor Authentication is covered in my last post.

This post explains only the additional steps required to make it work over ICMP, so you should have a look at that post before continuing.

Installation

Client

On a Linux system you can compile the client the same way we do for the server in the next step. For OS X and Windows there’s a binary available on the Hans website: http://code.gerade.org/hans/ .

Server

Download the source, compile it and copy the binary:

wget https://netcologne.dl.sourceforge.net/project/hanstunnel/source/hans-1.0.tar.gz

tar xfz hans-1.0.tar.gz

cd hans-1.0

make

cp hans /usr/local/sbin/

Martin Hundebøll provided a systemd unit file to start the service at boot time, and I modified it a bit to fit my needs.

Create the file /etc/systemd/system/hans.service and add this:

[Unit]

Description=ICMP Tunneling Daemon

After=syslog.target network.target

[Service]

Type=forking

EnvironmentFile=/etc/hans/hans.conf

Restart=on-abort

ExecStart=/usr/local/sbin/hans -s $HANS_IP -d $HANS_DEV -m $HANS_MTU -u $HANS_USER -p $HANS_PASS

[Install]

WantedBy=multi-user.target

Configuration

Hans server

Put the following content into /etc/hans/hans.conf :

HANS_IP=10.22.33.0

HANS_DEV=tun99

HANS_USER=nobody

HANS_PASS=<a not too short password>

HANS_MTU=1500

HANS_IP: The subnet used within the tunnel for communication (make sure that it’s different from your existing OpenVPN subnets)

HANS_DEV: The tun device Hans should use. As I use lower tun numbers for OpenVPN, I set it to tun99

Because the configuration contains the password in plain text, make it readable by root only:

chown root:root /etc/hans/hans.conf

chmod 600 /etc/hans/hans.conf
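A quick way to confirm that the permissions took effect is sketched below. The helper name `conf_is_private` is made up for this example, and it assumes GNU `stat` as found on most Linux servers (BSD and macOS `stat` use different flags).

```shell
#!/bin/sh
# Hypothetical helper: succeeds only if the file's mode is exactly 600.
# Assumes GNU coreutils stat, where -c '%a' prints the octal permission bits.
conf_is_private() {
    [ "$(stat -c '%a' "$1")" = "600" ]
}

# Warn if the Hans config exists but is readable by anyone besides its owner.
if [ -e /etc/hans/hans.conf ] && ! conf_is_private /etc/hans/hans.conf; then
    echo "warning: /etc/hans/hans.conf is not mode 600" >&2
fi
```

The check is deliberately strict: any mode other than 600 triggers the warning, including tighter ones like 400.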

Now enable and start Hans:

systemctl enable hans

systemctl restart hans

Hans client

To start it up on my Mac I use this simple command:

sudo ./hans -c your.server.com -m 1500 -p <the password you defined on the server>

Now you should be able to ping the server side:

ping 10.22.33.1
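If that ping fails or the tunnel is flaky, one thing worth checking is whether the path to the server carries ICMP packets as large as the `-m` value. The sketch below only does the arithmetic and prints candidate probe commands: an echo payload is the packet size minus 20 bytes of IPv4 header and 8 bytes of ICMP header. The function name `ping_payload_for` is an invention for this example, and `ping -M do` is the Linux iputils syntax for forbidding fragmentation (macOS uses `-D` instead).

```shell
#!/bin/sh
# Hypothetical helper: payload size for a ping that fills a packet of the given size.
# 20 bytes IPv4 header (no options) + 8 bytes ICMP echo header = 28 bytes overhead.
ping_payload_for() {
    echo $(( $1 - 28 ))
}

# Print a probe command for a few candidate packet sizes. Run them by hand
# and lower the size until the ping gets through unfragmented.
for size in 1500 1400 1300; do
    echo "ping -M do -c 3 -s $(ping_payload_for "$size") your.server.com"
done
```

If only smaller sizes get through, lowering `-m` on both client and server to a matching value is probably worth a try.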

OpenVPN Server

If your IP over ICMP tunnel is up, it’s time to configure OpenVPN.

It turned out that this was not as easy as I first thought, because there are two tunnels involved and they begin to interfere with each other if the routing is not set correctly.

DISCLAIMER: I don’t know if the solution below is the best, but it was the only one which made my setup work the way I want. If you have found a better solution, please add a comment below.

I had to make a separate OpenVPN configuration which doesn’t forward everything through the tunnel, because this killed the IP over ICMP tunnel. The only change to the original OpenVPN configuration I used in my last post was to comment out the line which pushes the default route to the client:

# push "redirect-gateway def1 bypass-dhcp"

NOTE: Make sure to configure a different IP range, port and tun device, as explained in the post mentioned above, and to set all firewall rules (masquerading).
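Before starting the second instance, a quick check can confirm that its configuration no longer pushes the default route. This is a minimal sketch: the helper name `pushes_default_route` and the config path are assumptions for illustration, not names from the original setup.

```shell
#!/bin/sh
# Hypothetical helper: succeeds if the config still contains an active
# (uncommented) push "redirect-gateway ..." line.
pushes_default_route() {
    grep -Eq '^[[:space:]]*push[[:space:]]+"redirect-gateway' "$1"
}

# The path below is an assumption; use the config file of your second instance.
conf=/etc/openvpn/server-icmp.conf
if [ -r "$conf" ] && pushes_default_route "$conf"; then
    echo "warning: $conf still pushes the default route" >&2
fi
```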

Fire up the new instance of the OpenVPN service. The last thing left to do is the client config.

OpenVPN client

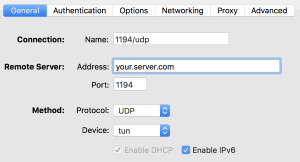

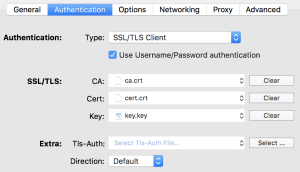

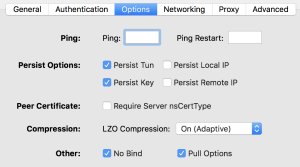

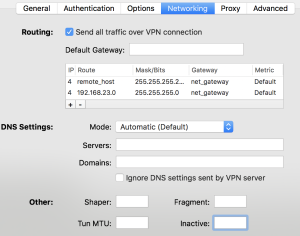

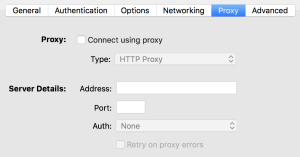

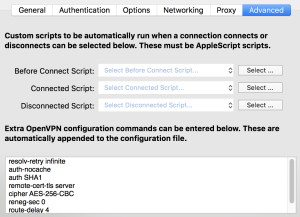

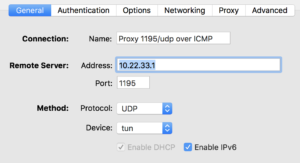

There are three options which are different from the configuration used for a normal OpenVPN connection. Please, again, have a look at the previous post for details.

The options you have to change are:

- IP/Port: 10.22.33.1 (The address of the IP over ICMP tunnel on the server side, and 1195 as port in my case)

- Tun MTU: For better performance, set the MTU of the OpenVPN connection to 1376 (or maybe even a bit lower)

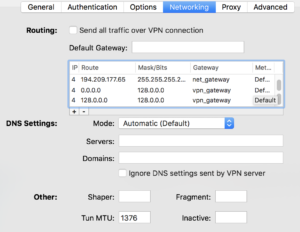

- Routes: In my Viscosity VPN client I can set additional routes directly. I had to add three of them:

- Route: <external IP of Hans server>/32, Gateway: net_gateway (a variable in Viscosity for the local gateway)

- Route: 0.0.0.0/1, Gateway: vpn_gateway (a variable in Viscosity for the VPN gateway address)

- Route: 128.0.0.0/1, Gateway: vpn_gateway

The last two routes are a bit special and a little workaround. Let me explain:

Every way I tried to overwrite the default route broke the IP over ICMP tunnel. So I created two /1 networks which together cover all IPv4 addresses, and because they are more specific than the default route (0.0.0.0/0), they take precedence over it. I’m sure there’s a better and cleaner way to do it, but I wasn’t able to find out how.
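For clients other than Viscosity, the same three routes can probably be expressed with OpenVPN’s own `route` directive in the client configuration, where `net_gateway` and `vpn_gateway` are keywords OpenVPN itself understands. Splitting the default route into two /1 halves is also what `redirect-gateway def1` does internally. The sketch below just prints the directives. The function name `print_icmp_tunnel_routes` is made up for this example, the address is a documentation placeholder, and I have not tested these lines against this exact setup.

```shell
#!/bin/sh
# Hypothetical helper: print OpenVPN client "route" directives equivalent to
# the three routes above. $1 is the external IP of the Hans server.
print_icmp_tunnel_routes() {
    echo "route $1 255.255.255.255 net_gateway"   # keep Hans traffic on the local gateway
    echo "route 0.0.0.0 128.0.0.0 vpn_gateway"    # 0.0.0.0/1 through the VPN
    echo "route 128.0.0.0 128.0.0.0 vpn_gateway"  # 128.0.0.0/1 through the VPN
}

# 203.0.113.10 is a placeholder; substitute the external IP of your Hans server.
print_icmp_tunnel_routes 203.0.113.10
```

The printed lines would go into the client’s OpenVPN configuration file.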

Now you should be able to connect your OpenVPN tunnel through the IP over ICMP tunnel. I could achieve up to 5 MBit/s, which isn’t very much, but not too shabby if the alternative is having no Internet at all or having to pay for it…
